McGurk Effect: When What You See Changes What You Hear

TL;DR: The McGurk effect shows that perception is constructed, not recorded: when many people see lips form "ga" but hear "ba," their brains create "da." This 1976 discovery reveals how the brain fuses sensory input, with implications for AI, hearing aids, and understanding consciousness itself.

Close your eyes and listen to someone say "ba." Now open them and watch their lips while they speak. Simple, right? But what happens when your eyes and ears disagree? In 1976, psychologists Harry McGurk and John MacDonald stumbled onto something extraordinary: show someone a video of lips forming "ga" while playing the sound "ba," and many people will swear they heard "da," a syllable that was never on the audio track. This illusion, now called the McGurk effect, shattered the assumption that we passively record reality. Instead, it revealed that perception is a construction job, and your brain is the architect making judgment calls you never knew were happening.

McGurk and MacDonald weren't looking for an illusion. They were studying infant speech perception when a technical error led them to dub mismatched audio onto video clips. When they watched the flawed recordings, something bizarre happened: the syllables they heard didn't match what the audio track contained. Their brains had fused the visual and auditory information into something entirely new. The finding was so striking they published it in Nature under the title "Hearing lips and seeing voices," and the scientific community took notice.

Why was this groundbreaking? Because it demonstrated conclusively that speech perception isn't purely auditory. We don't just listen to words; we watch them. The brain automatically weighs visual information from lip movements against acoustic signals, and when the two conflict, it doesn't simply choose one or the other. Instead, it creates a compromise percept, a third option that tries to make sense of the contradictory evidence. This was radical. It meant that one of the most fundamental human abilities, understanding speech, operates through active multisensory integration rather than passive sound reception.

The McGurk effect shows that speech perception isn't just about what you hear: your brain automatically fuses what you see with what you hear, creating an entirely new perception that never actually existed in either sense alone.

So what's actually happening in your head when you perceive "da" instead of "ba"? Neuroimaging studies have pinpointed the superior temporal sulcus (STS), a groove running along the side of the temporal lobe, as the convergence zone where auditory and visual speech information meet. When you hear speech, your auditory cortex processes the sound waves. Simultaneously, your visual cortex analyzes lip shapes and movements. These separate streams flow into the STS, which acts like a referee weighing the evidence from both senses.

Here's where it gets interesting. According to research from Baylor College of Medicine, the brain uses a form of Bayesian causal inference to make these decisions. Essentially, your brain maintains a massive internal database built from tens of thousands of speech examples you've encountered throughout your life. This database contains probability mappings: how likely is it that a particular mouth shape goes with a specific sound?

When faced with the McGurk stimulus (visual "ga" plus auditory "ba"), your brain calculates the likelihood that these signals came from a single speaker versus two different sources. Since the visual and auditory cues are somewhat compatible, just not perfectly aligned, the brain decides they probably came from one person and tries to find a compromise. The result? "Da" emerges as the best-fit solution, a phonetic middle ground that partially satisfies both inputs.

"All humans grow up listening to tens of thousands of speech examples, with the result that our brains contain a comprehensive mapping of the likelihood that any given pair of mouth movements and speech sounds go together."

- Dr. Michael Beauchamp, Professor of Neurosurgery, Baylor College of Medicine

This causal inference model doesn't just explain the McGurk effect; it also predicts when integration will happen and when the brain will keep the senses separate, matching human behavior better than simpler models that always fuse audiovisual cues.
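To make causal inference concrete, here is a minimal Python sketch of the generic one-dimensional Bayesian causal inference model from the multisensory-perception literature (the Gaussian formulation associated with Körding and colleagues). It is not the specific model built by the Baylor team. Every number is an assumption for illustration: syllables sit on a hypothetical "place of articulation" axis (ba = 0, da = 1, ga = 2), and the noise levels, prior, and `prior_common` value are made up. The function names are likewise hypothetical.

```python
import math

# Hypothetical 1-D "place of articulation" axis, for illustration only:
# lips (ba) at 0, tongue tip (da) at 1, back of the tongue (ga) at 2.
SYLLABLES = {"ba": 0.0, "da": 1.0, "ga": 2.0}


def nearest_syllable(x: float) -> str:
    """Map a continuous estimate to the closest syllable on the axis."""
    return min(SYLLABLES, key=lambda s: abs(SYLLABLES[s] - x))


def p_common_cause(x_a: float, x_v: float, sd_a: float, sd_v: float,
                   mu_p: float, sd_p: float, prior_common: float) -> float:
    """Posterior probability that the audio and video share one source."""
    va, vv, vp = sd_a ** 2, sd_v ** 2, sd_p ** 2
    # Likelihood of both cues if a single talker produced them.
    d1 = va * vv + va * vp + vv * vp
    q1 = ((x_a - x_v) ** 2 * vp + (x_a - mu_p) ** 2 * vv
          + (x_v - mu_p) ** 2 * va) / d1
    like_one = math.exp(-0.5 * q1) / (2 * math.pi * math.sqrt(d1))
    # Likelihood if each cue came from its own, independent source.
    q2 = (x_a - mu_p) ** 2 / (va + vp) + (x_v - mu_p) ** 2 / (vv + vp)
    like_two = math.exp(-0.5 * q2) / (
        2 * math.pi * math.sqrt((va + vp) * (vv + vp)))
    num = like_one * prior_common
    return num / (num + like_two * (1 - prior_common))


def heard_estimate(x_a: float, x_v: float, sd_a: float = 1.0,
                   sd_v: float = 1.0, mu_p: float = 1.0, sd_p: float = 2.0,
                   prior_common: float = 0.7):
    """Return (causal-inference estimate, forced-fusion estimate, P(one talker))."""
    wa, wv, wp = 1 / sd_a ** 2, 1 / sd_v ** 2, 1 / sd_p ** 2
    fused = (wa * x_a + wv * x_v + wp * mu_p) / (wa + wv + wp)  # one talker
    audio_only = (wa * x_a + wp * mu_p) / (wa + wp)              # two sources
    pc = p_common_cause(x_a, x_v, sd_a, sd_v, mu_p, sd_p, prior_common)
    # Model averaging: weight each interpretation by how probable it is.
    return pc * fused + (1 - pc) * audio_only, fused, pc


ci, ff, pc = heard_estimate(SYLLABLES["ba"], SYLLABLES["ga"])
print(nearest_syllable(ci), nearest_syllable(ff), round(pc, 2))  # da da 0.64

# A hypothetical visual cue far off the scale: the senses are kept apart.
ci, ff, pc = heard_estimate(SYLLABLES["ba"], 6.0)
print(nearest_syllable(ci), nearest_syllable(ff), round(pc, 2))  # ba ga 0.0
```

With audio "ba" and video "ga," both models land on "da." With a visual cue far outside the plausible range, the causal inference estimate stays near the audio because a single talker has become improbable. The forced-fusion model (always averaging) still drags the percept toward the visual cue. That difference is what allows the causal inference model to predict when integration will not happen.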

If you've tried the McGurk effect online and didn't experience it, you're not alone. Studies report that anywhere from 30 to 80 percent of participants perceive the illusion, with wide variation depending on the specific stimuli and testing conditions. So why the differences?

Individual susceptibility appears to depend on several factors. One is the relative quality of your auditory versus visual input. If you're getting poor-quality sound but clear visuals, your brain places more weight on what it sees, making you more susceptible to the McGurk effect. This makes intuitive sense: your brain is essentially asking, "Which sense should I trust more right now?" and adjusting the integration accordingly.
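The "which sense should I trust more right now?" idea is commonly formalized as reliability-weighted cue combination, where each cue is weighted by its reliability, the inverse of its noise variance. The sketch below assumes that textbook rule, since the article does not specify a formula. The noise values and function names are hypothetical.

```python
def visual_weight(sd_audio: float, sd_visual: float) -> float:
    """Fraction of the combined percept contributed by vision.

    Assumes reliability-weighted (inverse-variance) cue combination:
    the noisier a cue, the less it counts.
    """
    r_audio = 1.0 / sd_audio ** 2
    r_visual = 1.0 / sd_visual ** 2
    return r_visual / (r_audio + r_visual)


def combined_estimate(x_audio: float, x_visual: float,
                      sd_audio: float, sd_visual: float) -> float:
    """Weighted average of the two cues (positions on any shared scale)."""
    w = visual_weight(sd_audio, sd_visual)
    return w * x_visual + (1 - w) * x_audio


# Same clear video (noise 1.0), increasingly degraded audio (hypothetical units).
for sd_audio in (0.5, 1.0, 2.0):
    print(sd_audio, round(visual_weight(sd_audio, 1.0), 2))
# 0.5 0.2
# 1.0 0.5
# 2.0 0.8
```

Doubling the audio noise quadruples its variance, so vision's share of the percept climbs quickly. Under this model, that shift is what makes a listener with poor sound and clear visuals more susceptible to the illusion.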

Age plays a role too. Infants as young as four to five months show McGurk-like responses, suggesting the capacity for audiovisual integration develops early, before extensive language exposure. But the strength of the effect can change across the lifespan as sensory systems mature and, later, potentially decline with aging.

Neurodevelopmental differences matter as well. Research indicates that children and adults with autism spectrum disorders often show reduced susceptibility to the McGurk effect compared to neurotypical peers. This finding aligns with broader observations that autism involves differences in multisensory integration. For some individuals on the spectrum, the brain may process visual and auditory information more independently rather than automatically fusing them.

Recent modeling work even suggests that environmental noise during development could shape audiovisual integration. Artificial neural networks trained on noisy speech show increased McGurk-like responses, hinting that exposure to auditory noise, moderate noise in particular, might enhance the brain's reliance on visual cues. This raises intriguing questions about how growing up in noisy versus quiet environments might subtly shape the way we perceive speech.

The McGurk effect isn't universal in the same way across all cultures and languages. Cross-linguistic studies reveal that Japanese, Chinese, and English speakers experience the effect differently, with variations in both the frequency and strength of the illusion. Why? Languages differ in their phonemic inventories, the set of distinct sounds they use. A phonetic fusion that makes sense in English might be impossible or rare in Japanese, so speakers of different languages may integrate audiovisual cues in language-specific ways.

Cultural factors may play a role beyond the sounds themselves. Some researchers have proposed that face-avoidance norms, under which direct eye contact and sustained gaze at a speaker's face are less common, help explain why the McGurk effect is weaker in some cultures. If so, even low-level perceptual integration can be modulated by lifelong social practices: if you spend less time watching people's mouths, your brain might not weight visual speech cues as heavily.

These cross-cultural findings tell us something profound: the McGurk effect isn't purely a hardwired reflex. It's shaped by experience, both linguistic and social. Your brain's internal probability mappings reflect the specific audiovisual statistics of the language and culture you grew up in.

The strength of the McGurk effect varies dramatically across cultures and languages: your perception is shaped not just by biology, but by the specific linguistic and social environment you grew up in.

The McGurk effect is more than a quirky illusion; it's a window into the brain's fundamental operating principles. Modern neuroscience increasingly embraces the idea that perception is a form of controlled hallucination. Rather than passively recording sensory input like a camera, the brain actively generates predictions about what it expects to perceive, then uses incoming sensory data to confirm or revise those predictions. This framework, called predictive processing or predictive coding, has gained traction because it explains phenomena like the McGurk effect elegantly.

From this perspective, your perception of "da" arises because your brain's prediction machinery is trying to reconcile conflicting evidence. It generates a hypothesis ("this person is saying something"), weighs the visual and auditory evidence against its learned probabilities, and settles on "da" as the most probable interpretation. Crucially, this happens below the level of conscious awareness. Even when you know about the illusion, the fusion persists. You can't simply decide to hear "ba" when watching "ga" lips. The integration is automatic, embedded in the architecture of perception itself.
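Predictive processing is often described as repeatedly nudging a prediction until precision-weighted prediction errors are as small as they can get. The toy sketch below applies that process to a single number on a hypothetical articulation axis (ba = 0, da = 1, ga = 2). The precisions, starting prediction, learning rate, and step count are all assumptions. This is a generic gradient-descent illustration, not a model from the studies cited in this article.

```python
# Hypothetical articulation axis: ba = 0, da = 1, ga = 2 (illustrative only).
SYLLABLES = {"ba": 0.0, "da": 1.0, "ga": 2.0}


def settle_belief(x_audio: float, x_visual: float, prior_mean: float,
                  pi_audio: float = 1.0, pi_visual: float = 1.0,
                  pi_prior: float = 0.25, lr: float = 0.1,
                  steps: int = 200) -> float:
    """Revise a prediction by descending precision-weighted prediction errors."""
    mu = prior_mean  # start from the prior prediction
    for _ in range(steps):
        err_audio = x_audio - mu     # what the ears report vs. the prediction
        err_visual = x_visual - mu   # what the eyes report vs. the prediction
        err_prior = mu - prior_mean  # how far the belief has moved from the prior
        # Gradient step on 0.5 * sum(precision * error**2).
        mu += lr * (pi_audio * err_audio + pi_visual * err_visual
                    - pi_prior * err_prior)
    return mu


belief = settle_belief(SYLLABLES["ba"], SYLLABLES["ga"], prior_mean=0.5)
heard = min(SYLLABLES, key=lambda s: abs(SYLLABLES[s] - belief))
print(round(belief, 3), heard)  # 0.944 da
```

The loop settles on the same precision-weighted compromise that a one-shot Bayesian calculation would give (here 2.125 / 2.25, about 0.944). That value is closest to "da," even though neither sense reported it.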

This resistance to conscious override is what makes the McGurk effect so compelling as a teaching tool. It forces us to confront the fact that our subjective experience, what we're absolutely certain we heard, can be wrong. Your ears delivered "ba," but your brain reported "da," and there's nothing you can do about it. This has philosophical implications too. If even basic sensory experiences are constructed rather than directly transmitted, what does that mean for our grasp on objective reality?

"Using data from a large number of subjects, the model with causal inference better predicted how humans would or would not integrate audiovisual speech syllables."

- John Magnotti, Researcher, Baylor College of Medicine

Understanding the McGurk effect has moved far beyond academic curiosity. Researchers at Baylor College of Medicine note that insights into multisensory speech integration can inform the design of hearing aids and cochlear implants. Traditional hearing devices focus almost exclusively on amplifying or clarifying auditory signals, but if the brain naturally integrates visual speech information, why not enhance that process?

Future hearing assistance technologies might incorporate visual cues, either by training users to pay more attention to lip movements or by using augmented reality systems that highlight relevant visual features. The causal inference model developed by Beauchamp and colleagues provides a computational roadmap, potentially guiding algorithms that balance auditory and visual input in ways that match how the brain actually works. For aging populations experiencing auditory decline, enhancing the visual component of speech perception could offer significant benefits.

Speech therapy is another frontier. Therapists working with individuals who have auditory processing difficulties might leverage the McGurk effect by strengthening visual speech cues during rehabilitation. If someone struggles to distinguish certain phonemes auditorily, consciously attending to lip shapes could compensate.

Then there's artificial intelligence. Modern speech recognition systems are increasingly multimodal, combining audio analysis with lip-reading. Artificial neural networks trained on audiovisual speech data now exhibit McGurk-like behavior, spontaneously fusing conflicting cues much like humans do. Researchers discovered that even networks trained entirely on congruent (matching) audiovisual speech will show the McGurk percept when tested with incongruent stimuli. More strikingly, training these networks on noisy speech increases their visual reliance and amplifies McGurk responses, paralleling what might happen in human development.

This isn't just a curiosity. It suggests that AI systems designed to understand speech in noisy environments, such as smart assistants in crowded rooms or in-car systems processing driver commands, benefit from integrating visual information. The McGurk effect shows that this strategy isn't just clever engineering; it's how biological brains solve the same problem.

The shift to remote work and video conferencing has made audiovisual communication more central to daily life than ever before. But video calls introduce new variables: latency, compression artifacts, low resolution, and visual glitches. How does the McGurk effect play out when the audiovisual synchrony is imperfect?

Some evidence suggests that temporal asynchrony between audio and video reduces the McGurk effect. The brain tolerates small offsets, especially when the video runs slightly ahead of the audio, but once the sound and image drift far enough out of sync, it becomes less likely to fuse them. This is presumably because the causal inference system detects the mismatch and decides the signals probably came from different sources. Noticeable video call lag might therefore protect you from McGurk-style illusions, but at the cost of making speech perception more effortful overall: you're forced to rely more heavily on audio alone, without the natural boost that visual cues provide.
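The same causal inference logic can be applied to timing by asking how likely a given audio-video offset is under "one talker" versus "unrelated streams." In this hypothetical Python sketch, the one-talker case is a split Gaussian. Its widths (150 ms when video leads, 60 ms when audio leads) are assumed values chosen to reflect greater tolerance for video-leading offsets. The unrelated-streams case is a uniform spread over plus or minus one second, which is also an assumption. The function name and the 0.7 prior are illustrative.

```python
import math


def fusion_probability(offset_ms: float, prior_common: float = 0.7,
                       sd_video_leads: float = 150.0,
                       sd_audio_leads: float = 60.0,
                       max_offset_ms: float = 1000.0) -> float:
    """P(one talker | offset); offset_ms > 0 means audio arrives after video.

    All parameter values are hypothetical. Offsets are assumed to lie
    within +/- max_offset_ms.
    """
    sd = sd_video_leads if offset_ms >= 0 else sd_audio_leads
    # Split (two-piece) Gaussian centred on zero offset: one talker.
    norm = 2.0 / (math.sqrt(2.0 * math.pi) * (sd_video_leads + sd_audio_leads))
    like_common = norm * math.exp(-0.5 * (offset_ms / sd) ** 2)
    # Uniform density over the allowed offsets: two unrelated streams.
    like_separate = 1.0 / (2.0 * max_offset_ms)
    num = like_common * prior_common
    return num / (num + like_separate * (1.0 - prior_common))


for offset in (0, 100, -100, 400, -400):
    print(offset, round(fusion_probability(offset), 2))
```

With these assumed settings, a 100 ms offset still leaves a high probability of a single talker, especially when the video leads. A 400 ms lag pushes that probability well below one half, which is the point at which the model would stop fusing the two streams.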

Visual quality matters too. Blurry or pixelated video makes lip movements harder to read, reducing the visual signal's reliability. Your brain adjusts by down-weighting vision, but this again removes a valuable source of information, especially in noisy acoustic environments. As virtual communication technology continues to evolve, understanding these trade-offs could guide improvements in compression algorithms, display fidelity, and synchronization protocols.

Video call lag and poor visual quality don't just annoy you: they can disrupt your brain's natural audiovisual integration, making speech perception more effortful and less accurate.

There's also an intriguing future possibility: augmented communication systems that artificially enhance visual speech cues in real time, making lip movements more salient or even generating synthetic visual speech matched to audio when video is unavailable. Such systems would effectively reverse-engineer the McGurk effect, using knowledge of how the brain integrates senses to optimize communication.

No discussion of the McGurk effect would be complete without addressing the replication debate. In 2020, a large-scale replication study found that only about 29 percent of participants reported fusion responses to the classic McGurk stimulus. This is substantially lower than the 80-plus percent rates reported in some earlier studies. What explains the discrepancy?

Part of the answer may lie in methodology. The original McGurk studies used carefully crafted stimuli, often with specific talkers, lighting, and recording conditions. Replication efforts using different stimuli, online testing environments, or diverse participant pools might naturally produce different results. Individual variability is higher than early studies suggested, and factors like attention, stimulus quality, and even the specific phonetic contrasts used can all shift the effect's prevalence.

Does this mean the McGurk effect is less robust than once thought? Not necessarily. It means that the effect is highly context-dependent, sensitive to the details of how it's elicited. Rather than diminishing the phenomenon's importance, these replication challenges have spurred more nuanced investigations into what conditions maximize audiovisual integration and why some people and some stimuli produce stronger illusions than others.

Current research is also exploring open questions that go beyond the basic illusion. How do attention and cognitive load modulate the effect? Can training increase or decrease susceptibility? What role do individual differences in sensory processing styles play? And as virtual and augmented reality technologies create entirely new kinds of audiovisual environments, how will the brain's integration mechanisms adapt?

One of the most remarkable aspects of the McGurk effect is how accessible it is. You don't need a lab to experience it. A quick search for "McGurk effect demonstration" on YouTube will yield dozens of videos where you can see and hear the illusion firsthand. Try watching a few with your eyes open, then closing your eyes and listening to the audio alone. Many people are startled to discover that what they were certain they heard with eyes open sounds completely different with eyes closed.

For those who don't experience the effect strongly, try varying the conditions: adjust the volume, watch on a larger or smaller screen, or test yourself when you're tired versus alert. The effect's variability is part of what makes it fascinating. It's a visceral reminder that perception isn't a simple readout of the world. It's a sophisticated inference process, shaped by context, expectation, and the idiosyncrasies of your own brain's wiring.

The McGurk effect teaches us humility about our own perceptions. We like to think we experience the world as it truly is, that our senses provide direct, unfiltered access to reality. The McGurk illusion proves otherwise. Your brain is constantly making judgment calls, weighing probabilities, and constructing a perceptual narrative that feels seamless and certain even when it's objectively wrong.

This has implications that ripple far beyond speech perception. If your brain constructs something as basic as hearing a syllable, what about more complex perceptions and judgments? How much of your experience of the world is inference rather than direct input? The McGurk effect suggests that the line between sensation and interpretation is far blurrier than intuition suggests.

As research continues, the McGurk effect remains a powerful tool for probing the mysteries of multisensory integration, individual differences in perception, and the brain's predictive machinery. It bridges basic neuroscience, clinical applications, and cutting-edge AI research. Most importantly, it reminds us that the brain is not a passive receiver but an active builder of experience. What you see really does change what you hear, and that's not a bug in the system; it's a fundamental feature of how human perception works. Your brain isn't lying to you, exactly. It's just doing what it evolved to do: making the best guess it can from the imperfect, ambiguous signals the world provides, one constructed syllable at a time.

Solar sail spacecraft navigate the solar system by tacking on sunlight, angling reflective sheets to redirect photon pressure just as sailboats tack against the wind. Missions like IKAROS and LightSail 2 have proven the physics works, and next-generation designs could enable interstellar travel.

Metformin, a cheap diabetes drug taken by 150 million people, may slow aging by mildly stressing cells through mitochondrial complex I inhibition, triggering protective AMPK pathways. The landmark TAME trial is now testing this in humans, potentially making aging itself an FDA-treatable condition.

Scientists have identified 16 climate tipping elements that can trigger each other in cascading chain reactions. New research shows five may already be at risk at current warming, and crossing just one threshold can double the number of systems that collapse.

The cheerleader effect is a proven cognitive bias where people look more attractive in groups because the brain automatically averages faces, smoothing out individual flaws. Research shows the sweet spot is 3-5 people, it works for all genders, and it has real implications for dating apps and social media strategy.

Snowshoe hares change coat color based on day length, not snow. As climate change shortens snow seasons, white hares stand out against brown landscapes, increasing predation by 7% per week. Some populations carry a borrowed gene for staying brown, offering a potential genetic lifeline.

Care workers earn poverty-level wages despite performing essential labor worth trillions globally. Historical gendering of domestic work, flawed economic models, and systemic racism entrench this undervaluation, but evidence from Nordic countries and union organizing shows that treating care as infrastructure produces massive economic returns.

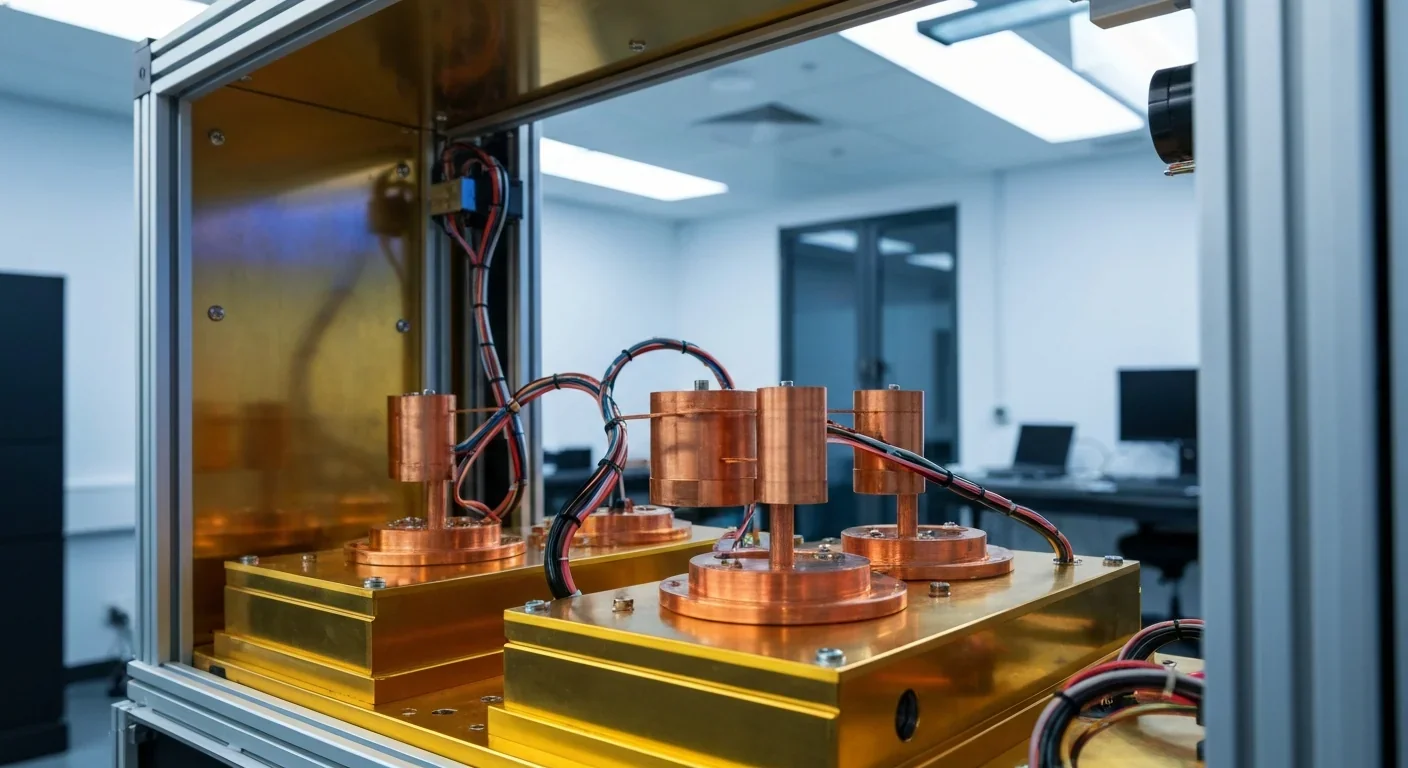

Google, IBM, and every major quantum computing company converged on the transmon qubit because it offered the best trade-off between noise immunity and manufacturability. Flux qubits survive in D-Wave's annealers, and hybrid fluxonium designs may soon challenge transmon dominance.