TL;DR: Motion parallax, the brain's ability to extract 3D depth from head movement alone, is a powerful monocular depth cue now driving innovations from Apple's iOS spatial effects to glasses-free 3D displays and inclusive VR design for people with impaired stereo vision.

Close one eye and look around the room. Now move your head from side to side. Even without binocular vision, the world still looks satisfyingly three-dimensional. Much of that persistent sense of depth, the one you've relied on every time you've caught a ball, navigated a crowded sidewalk, or parallel parked, comes from a perceptual process so fundamental that your brain runs it continuously without you noticing. It's called motion parallax, and it might be the most underappreciated superpower in your visual toolkit.

Here's the basic idea: when you move, nearby objects appear to slide quickly across your field of view while distant ones barely budge. Look out the window of a moving train: the fence posts rush past, but the mountains on the horizon seem almost stationary. Your brain reads those different speeds, what neuroscientists call a retinal motion gradient, and reverse-engineers a map of how far away everything is.
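
To put rough numbers on the train example, here is a minimal Python sketch. It assumes an object seen directly abeam of a passenger moving in a straight line, where the line of sight rotates at about v/d radians per second (v = speed, d = distance). The speed and distances are illustrative, not measurements from any study.

```python
import math


def angular_speed_deg_per_s(speed_m_s: float, distance_m: float) -> float:
    """Angular speed of a stationary object seen directly abeam (at 90 degrees to travel).

    At that moment the line of sight rotates at speed / distance radians per second.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return math.degrees(speed_m_s / distance_m)


TRAIN_SPEED_M_S = 30.0  # about 108 km/h; an illustrative value

for label, distance in [("fence post", 5.0), ("farmhouse", 200.0), ("mountain", 20_000.0)]:
    rate = angular_speed_deg_per_s(TRAIN_SPEED_M_S, distance)
    print(f"{label:>10} at {distance:>8.0f} m: {rate:10.4f} deg/s")
```

Because the rate scales as 1/d, the fence post 5 m away sweeps past about 4,000 times faster than a mountain 20 km away. That steep falloff is the gradient the visual system reads.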

This makes motion parallax a monocular depth cue, meaning it works perfectly well with just one eye. That's a crucial distinction from stereopsis, the process by which slight differences between left-eye and right-eye images get fused into a sensation of 3D. Motion parallax operates on completely different input: your own movement through space. As one Verywell Health explainer describes it, motion parallax "contributes to your sense of self-motion" and "occurs when you move your head back and forth." Relative to the point you're looking at, closer objects move in the opposite direction of your head motion, while farther objects move with it.
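
The direction rule falls out of simple geometry. This sketch assumes a small sideways head movement, an object close to the line of sight to whatever you're fixating, and small angles; under those assumptions the object's retinal displacement is proportional to 1/f - 1/d, where f is the fixation distance and d is the object's distance. The function name and numbers are illustrative.

```python
def retinal_shift_rad(head_shift_m: float, fixation_m: float, distance_m: float) -> float:
    """Small-angle retinal displacement of a point after a sideways head movement.

    Assumes the point lies close to the line of sight to the fixated target and that
    the eyes counter-rotate to keep that target on the fovea. Positive values mean the
    point drifts the same way the head moved; negative values mean the opposite way.
    """
    return head_shift_m * (1.0 / fixation_m - 1.0 / distance_m)


FIXATION_M = 2.0     # you are looking at something 2 m away
HEAD_SHIFT_M = 0.05  # a 5 cm lean to one side

for distance in (0.5, 2.0, 10.0):
    shift = retinal_shift_rad(HEAD_SHIFT_M, FIXATION_M, distance)
    if shift > 0:
        verdict = "moves with the head"
    elif shift < 0:
        verdict = "moves against the head"
    else:
        verdict = "stays put (it sits at the fixation distance)"
    print(f"object at {distance:4.1f} m: {shift:+.4f} rad, {verdict}")
```

Objects nearer than the fixation point (d < f) get a negative value and drift against the head; farther ones drift with it, and the value approaches head_shift / f for very distant objects.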

What makes this cue remarkable is how early it develops. Research on depth perception development suggests that monocular depth cues like motion parallax emerge earlier in infancy than binocular stereopsis, which typically doesn't kick in until around 3 to 5 months of age. Babies are reading motion before they can even fuse their two eyes' images into stereo depth.

Motion parallax works with just one eye. Your brain extracts depth by comparing how fast objects move across your retina when you shift position, making it one of the most versatile depth cues in your perceptual arsenal.

The scientific story of motion parallax stretches back to Hermann von Helmholtz, the 19th-century polymath who catalogued virtually every mechanism of human vision. Helmholtz recognized that the shifting views created by head movement provided distance information independent of two-eyed vision. But it took decades for researchers to pin down exactly how powerful this cue could be.

A landmark moment came in 1953, when psychologists Hans Wallach and D.N. O'Connell published their experiments on the "kinetic depth effect," a close cousin of motion parallax in which the object moves rather than the observer. Using nothing more than rotating wire shapes and their cast shadows, they showed that flat, featureless silhouettes suddenly snapped into vivid 3D objects the moment they began to move. In one experiment, 12 of 16 subjects reported seeing depth after just a single 10-second exposure to a rotating shadow. The key insight was that the sequence of changing images, not any single frame, created the depth.
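
Why motion, rather than any single frame, carries the depth can be shown with an idealized model. The sketch below assumes parallel (orthographic) projection, rotation about a vertical axis at a known rate and direction, and a few hypothetical wire vertices. In a single frame at angle 0 the shadow's horizontal coordinate is just x, so depth is invisible; the shadow's horizontal velocity, however, is proportional to depth.

```python
import math

# Hypothetical vertices of a wire figure, as (x, y, z); z is the depth axis.
WIRE_POINTS = [(1.0, 0.0, 0.5), (-0.5, 1.0, -1.0), (0.0, -1.0, 2.0)]


def shadow(points, angle_rad):
    """Parallel-projected 'shadow': rotate about the vertical (y) axis, then discard z."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [(x * c + z * s, y) for x, y, z in points]


def depth_from_shadow_motion(points, omega_rad_s, dt_s):
    """Estimate each vertex's depth from how its shadow moves over a short interval.

    At angle 0 the shadow's x-coordinate is just x, so a single frame carries no depth.
    Its horizontal velocity, however, is omega * z, so z is approximately dx / (omega * dt).
    """
    before = shadow(points, 0.0)
    after = shadow(points, omega_rad_s * dt_s)
    return [(xa - xb) / (omega_rad_s * dt_s) for (xb, _), (xa, _) in zip(before, after)]


print(shadow(WIRE_POINTS, 0.0))  # one frame: only x and y survive
print(depth_from_shadow_motion(WIRE_POINTS, omega_rad_s=1.0, dt_s=0.001))  # close to the true z values
```

One caveat the model also captures: under parallel projection, flipping the sign of every depth and reversing the rotation direction produces identical shadows, which is why such displays can perceptually reverse. The sketch sidesteps that by assuming the rotation direction is known.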

This principle wasn't just academic. Filmmakers had already been exploiting it. In the late 1930s, Walt Disney's studio developed the multi-plane camera, a towering apparatus that stacked layers of hand-painted artwork on separate glass panes at varying distances from a vertical camera. When the camera moved, foreground layers shifted faster than background layers, producing a parallax effect that gave two-dimensional animation a breathtaking sense of depth. The opening sequence of Snow White and the Seven Dwarfs used this technique to pull audiences into the forest canopy.

Meanwhile, inventors were attacking the problem from the hardware side. In 1901, Frederic Eugene Ives created the first functional autostereoscopic image using a parallax barrier, a precisely engineered grating of fine slits placed in front of a print. By blocking certain viewing angles, the barrier directed different image strips to each eye, producing a glasses-free 3D effect. This century-old concept would eventually power devices like the Nintendo 3DS, proving that parallax-based depth technology has deeper roots than most people realize.
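
The spacing Ives had to get right follows from similar triangles. As a minimal sketch, assuming an idealized two-view barrier with left- and right-eye image columns alternating behind it, a viewer at a fixed distance, and a typical adult eye separation of about 63 mm, the gap between barrier and image and the slit pitch can be computed as below. Parameter names and example values are illustrative.

```python
def parallax_barrier_geometry(column_pitch_mm: float,
                              viewing_distance_mm: float,
                              eye_separation_mm: float = 63.0) -> tuple[float, float]:
    """Gap and slit pitch for an idealized two-view parallax barrier.

    Assumes left- and right-eye image columns alternate, each `column_pitch_mm` wide,
    and that `viewing_distance_mm` is measured from the eyes to the barrier.
    Similar triangles give:
      gap   = column_pitch * viewing_distance / eye_separation
      pitch = 2 * column_pitch * viewing_distance / (viewing_distance + gap)
    """
    gap = column_pitch_mm * viewing_distance_mm / eye_separation_mm
    slit_pitch = 2 * column_pitch_mm * viewing_distance_mm / (viewing_distance_mm + gap)
    return gap, slit_pitch


gap, pitch = parallax_barrier_geometry(column_pitch_mm=0.1, viewing_distance_mm=400.0)
print(f"barrier sits {gap:.3f} mm in front of the image; slits repeat every {pitch:.4f} mm")
```

The slit pitch comes out slightly smaller than two image columns so that the sightlines through every slit converge on the same pair of eye positions, which is also why such displays have a preferred viewing distance.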

"The kinetic depth effect takes place when a flat image is moved, producing a sequence of retinal images that the brain interprets as 3D depth."

- Hans Wallach & D.N. O'Connell, Psychologists, 1953

So what actually happens when retinal motion hits your visual cortex? The processing pipeline is surprisingly well-mapped. Light entering your eye is first handled by V1, the primary visual cortex at the back of your skull, which detects basic features like edges and orientations. But the heavy lifting for motion-based depth happens downstream, in a brain region called MT, also known as V5.

Neurons in area MT are tuned to both the direction and speed of visual motion, forming the neural basis of parallax depth computation. Research confirms that MT, rather than V1, is "directly involved in the generation" of depth-from-motion perception. These neurons fire differently depending on whether a moving pattern indicates something closer or farther away, essentially computing a depth sign from pure velocity information. Studies show the temporal threshold for this processing sits between 50 and 85 milliseconds, roughly the time it takes to measure velocity reliably.

What's particularly elegant is how the brain handles its own movement. For a long time, neuroscientists assumed the visual system simply subtracted self-generated motion as noise. But research from Dr. Gregory DeAngelis at the University of Rochester Medical Center tells a different story. His team found that the brain actively uses the retinal motion generated by its own eye movements to calculate where objects are and how far away they sit. Rather than being a nuisance signal, that self-generated optic flow becomes a rich source of depth data.
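
Retinal motion alone is ambiguous about depth: the same drift across the retina could come from a near object while the head moves one way or a far object while it moves the other. A signal about how the eyes rotate to hold fixation resolves this, in line with the idea above that eye movements are a source of depth information rather than noise. The sketch below assumes a small sideways head movement, an object near the line of sight, and small angles, where the ratio of retinal motion to eye rotation equals 1 - f/d (f = fixation distance, d = object distance). It simply inverts that relation; the names and numbers are illustrative, not a model of MT neurons.

```python
def depth_from_parallax_and_pursuit(fixation_m: float,
                                    retinal_motion_rad: float,
                                    pursuit_rotation_rad: float) -> float:
    """Estimate an object's distance from its retinal motion plus the eye's pursuit rotation.

    Simplified geometry (small sideways head shift, object near the line of sight):
        retinal_motion / pursuit_rotation = 1 - fixation / distance
    retinal_motion is positive when the object moves the same way as the head;
    pursuit_rotation is the magnitude of the eye's counter-rotation.
    """
    if pursuit_rotation_rad == 0:
        raise ValueError("need a nonzero eye rotation to scale the retinal motion")
    ratio = retinal_motion_rad / pursuit_rotation_rad
    if ratio >= 1.0:
        raise ValueError("ratio >= 1 would put the object at or beyond infinity")
    return fixation_m / (1.0 - ratio)


# Simulate a 5 cm head shift while fixating 2 m away, then recover each distance.
head_shift, fixation = 0.05, 2.0
pursuit = head_shift / fixation  # eye counter-rotation needed to hold fixation
for true_distance in (0.5, 10.0):
    retinal = head_shift * (1 / fixation - 1 / true_distance)
    print(true_distance, depth_from_parallax_and_pursuit(fixation, retinal, pursuit))
```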

This helps explain something counterintuitive: in certain active navigation tasks, motion-based depth cues can matter more than binocular stereopsis. A study by Simon Rushton and colleagues demonstrated that retinal motion distribution, not depth order from binocular disparity, was the primary factor determining steering accuracy. Adding binocular depth cues produced no measurable improvement: an ANOVA found no significant effect across disparity conditions.

In steering tasks, researchers found that retinal motion distribution, not binocular disparity, determined accuracy. The brain doesn't always need two eyes to navigate; it just needs movement.

If motion parallax needs only one eye and a little head movement, why can't a flat screen fake it? That question has driven display engineers for decades, and the answers are now showing up in your pocket.

The simplest approach is the one Apple introduced with parallax wallpapers in iOS 7 and has revived with the Spatial Scenes feature in iOS 26. Tilt your iPhone, and AI-generated depth layers shift at different rates, creating a subtle but convincing sense that you're peering through a window rather than staring at glass. Apple's iOS 26 beta 2 extended this with a parallax effect across wallpapers, notifications, and app icons, using motion-sensor data to simulate the motion cue. It's not true 3D, but your brain doesn't care. It sees differential motion and fills in the depth.
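
Apple hasn't published how its parallax effects are implemented, so the following is only a generic sketch of the idea: read the device's tilt (on a phone this would come from its motion sensors; here it is just passed in as numbers), then slide each layer by an amount proportional to its assigned depth. The depth values, gain, and clamp are hypothetical tuning parameters.

```python
def layer_offsets(tilt_x_rad: float,
                  tilt_y_rad: float,
                  depths: list[float],
                  gain_px_per_rad: float = 60.0,
                  max_shift_px: float = 25.0) -> list[tuple[float, float]]:
    """Map device tilt to (horizontal, vertical) pixel offsets for a stack of layers.

    tilt_x_rad / tilt_y_rad: tilt driving horizontal / vertical shifts, in radians.
    depths: hypothetical normalized depth per layer, 0.0 = screen plane, 1.0 = farthest.
    Each layer slides opposite to the tilt in proportion to its depth, so layers at
    different depths drift at different rates; offsets are clamped to keep motion subtle.
    """
    def clamp(value: float) -> float:
        return max(-max_shift_px, min(max_shift_px, value))

    return [
        (clamp(-tilt_x_rad * d * gain_px_per_rad), clamp(-tilt_y_rad * d * gain_px_per_rad))
        for d in depths
    ]


# Tilting about 11 degrees (0.2 rad) around one axis, with three layers:
print(layer_offsets(0.2, 0.0, depths=[0.0, 0.5, 1.0]))
```

The clamp is a design choice worth keeping: capping how far layers travel is one simple lever for the comfort complaints discussed below.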

Not everyone loves it, though. A Reddit thread about the iOS 26 Liquid Glass interface garnered over 2,500 upvotes, with users reporting that the parallax glow on app icons made them feel dizzy and disoriented. This is a useful reminder that the brain judges self-motion by combining visual motion with vestibular signals from the inner ear, and when the visual cue conflicts with what your inner ear reports, the result can be genuinely nauseating.

More ambitious systems track your actual head position. Daniel Habib, a former Meta engineer, built True3D Labs, a platform that uses a standard front-facing camera to detect facial landmarks and estimate six-degree-of-freedom head pose. The system reprojects the scene in real time, making the display behave "like a window into a 3D world," with no glasses required. The rendering pipeline uses volumetric video techniques including voxels and Gaussian splats, building on a lineage that includes Johnny Lee's famous 2007 Wii Remote demo.
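
True3D Labs' volumetric pipeline isn't described here in enough detail to reproduce, but the core "window" trick goes back to head-coupled perspective demos like Lee's: given the viewer's eye position relative to the physical screen, render with an asymmetric (off-axis) frustum whose edges pass through the screen's edges. This minimal sketch assumes the eye position already comes from some face tracker, uses metres with the screen centred at the origin, and uses hypothetical function names.

```python
from typing import NamedTuple


class Frustum(NamedTuple):
    left: float
    right: float
    bottom: float
    top: float
    near: float
    far: float


def off_axis_frustum(eye_xyz: tuple[float, float, float], screen_w: float, screen_h: float,
                     near: float = 0.01, far: float = 100.0) -> Frustum:
    """Asymmetric view frustum for a physical screen seen from a tracked eye position.

    Coordinates in metres: screen centred at the origin in the z = 0 plane,
    +z pointing out of the screen toward the viewer, eye at (ex, ey, ez) with ez > 0.
    The screen edges are projected onto the near plane.
    """
    ex, ey, ez = eye_xyz
    if ez <= 0:
        raise ValueError("eye must be in front of the screen")
    scale = near / ez
    return Frustum(
        left=(-screen_w / 2 - ex) * scale,
        right=(screen_w / 2 - ex) * scale,
        bottom=(-screen_h / 2 - ey) * scale,
        top=(screen_h / 2 - ey) * scale,
        near=near,
        far=far,
    )


def projection_matrix(f: Frustum) -> list[list[float]]:
    """OpenGL-style (glFrustum) perspective matrix for the frustum, row-major."""
    left, right, bottom, top, near, far = f
    return [
        [2 * near / (right - left), 0.0, (right + left) / (right - left), 0.0],
        [0.0, 2 * near / (top - bottom), (top + bottom) / (top - bottom), 0.0],
        [0.0, 0.0, -(far + near) / (far - near), -2 * far * near / (far - near)],
        [0.0, 0.0, -1.0, 0.0],
    ]


# Viewer 60 cm from a 60 cm x 34 cm screen, leaning 10 cm to the right:
frustum = off_axis_frustum((0.10, 0.0, 0.60), screen_w=0.60, screen_h=0.34)
print(frustum)
```

The projection alone isn't enough: the view transform must also place the virtual camera at the tracked eye position (translate the scene by the negated eye coordinates), so that objects behind the screen plane shift relative to its edges as the viewer moves.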

On the industrial side, autostereoscopic displays have matured significantly. Modern systems using lenticular lenses can encode 8 to 16 discrete viewing angles per lens element, allowing a viewer's perspective to shift smoothly as they move. BOE, one of the world's largest display manufacturers, is developing naked-eye 3D panels using both parallax barriers and light field technology for wide viewing angles. Even analog approaches endure: lenticular printing in advertising holds viewer attention for up to 30 seconds compared to roughly 1 second for flat posters.
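
Encoding several viewing angles under each lens amounts to interleaving views column by column. The sketch below is deliberately idealized: it assumes vertical lenses, each exactly N pixel columns wide, and views stored as nested lists of pixel values. Real interlacing software also handles slanted lenses, lens-to-pixel pitch mismatch, and the strip ordering imposed by the lens optics; the function name is hypothetical.

```python
def interlace_views(views: list[list[list[int]]]) -> list[list[int]]:
    """Interleave N equally sized views column by column for an idealized lenticular sheet.

    Assumes vertical (non-slanted) lenses, each exactly N pixel columns wide, so
    output column c is taken from view c % N.
    """
    n = len(views)
    if n == 0:
        raise ValueError("need at least one view")
    height, width = len(views[0]), len(views[0][0])
    if any(len(v) != height or any(len(row) != width for row in v) for v in views):
        raise ValueError("all views must have the same dimensions")
    return [[views[c % n][r][c] for c in range(width)] for r in range(height)]


# Three tiny 2x6 "views", each filled with its own index so the pattern is visible:
views = [[[k] * 6 for _ in range(2)] for k in range(3)]
for row in interlace_views(views):
    print(row)
```

Each view contributes only every Nth column, so an 8- to 16-view sheet trades horizontal resolution per view for smoother transitions between viewpoints.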

"The system reprojects the scene in real-time, allowing the display to behave like a window into a 3D world. No glasses are required, and the illusion of depth is created entirely through motion parallax."

- Daniel Habib, True3D Labs

Cinema has leveraged motion parallax from its earliest days. Early filmmakers intuitively placed foreground objects between the camera and the action, so that even a simple dolly shot created differential motion between layers. As one analysis notes, filmmakers "strategically use this effect to heighten drama, reveal key characters, and gently lead your eyes to the most important parts of the frame."

The technique reached blockbuster sophistication with films like Inception, where camera-tracked parallax and layered depth mapping made surreal architectural folds feel photographically real. Modern post-production tools allow editors to create depth maps, grayscale images where brightness indicates distance, that separate footage into parallax-responsive foreground, midground, and background layers. Done well, the result transforms flat shots into spaces you feel you could step into.
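
Splitting a shot by depth map can be sketched in a few lines. This assumes a depth map normalized to [0, 1] with brighter meaning nearer (conventions differ between tools), two hypothetical cut points, and hypothetical relative speeds per layer; nearer layers slide further for the same virtual camera move, which is what produces the parallax.

```python
# Hypothetical relative parallax speeds: nearer layers slide further for the same camera move.
LAYER_SPEED = {"foreground": 1.0, "midground": 0.5, "background": 0.15}


def classify(depth_value: float, near_cut: float = 0.66, far_cut: float = 0.33) -> str:
    """Assign a layer from a normalized depth-map value, assuming brighter (1.0) = nearer."""
    if depth_value >= near_cut:
        return "foreground"
    if depth_value <= far_cut:
        return "background"
    return "midground"


def layer_labels(depth_map: list[list[float]]) -> list[list[str]]:
    """Label every pixel of a grayscale depth map with its layer."""
    return [[classify(v) for v in row] for row in depth_map]


def layer_shifts(camera_move_px: float) -> dict[str, float]:
    """Horizontal shift per layer, in pixels, for a given virtual camera move."""
    return {name: camera_move_px * speed for name, speed in LAYER_SPEED.items()}


depth_map = [[0.9, 0.8, 0.5], [0.4, 0.2, 0.1]]
print(layer_labels(depth_map))
print(layer_shifts(40.0))
```

Real post-production tools also have to fill the disoccluded regions, the background pixels that come into view when a foreground layer slides away, which this sketch ignores.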

But the real magic of cinematic parallax is psychological, not just visual. When a camera pushes past a rain-streaked window toward a character, the differential speed between the water drops and the face behind them creates an involuntary sense of intimacy. Parallax doesn't just show depth; it structures narrative flow and emotional engagement.

Perhaps the most significant real-world application of motion parallax research is for the roughly 5-10% of the population who lack normal stereo vision. Conditions like amblyopia and strabismus can disrupt binocular depth perception, forcing the brain to rely more heavily on monocular cues. Research confirms that individuals with impaired stereoscopic vision lean heavily on motion parallax to navigate their environment successfully.

The Rushton study's finding that retinal motion distribution alone can drive accurate steering suggests an intriguing possibility for inclusive design: VR and AR systems could prioritize motion-based depth cues over stereoscopic rendering for users who can't benefit from binocular disparity. Since motion parallax requires only head tracking and scene reprojection, not dual-view rendering, it could actually simplify the computational pipeline while serving a broader user base.

Within the next decade, you'll likely interact with motion parallax technology daily without thinking about it. Head-tracked parallax on laptops and tablets will make video calls feel like conversations through a window. Lenticular signage will continue expanding in retail and advertising. And autonomous vehicles already use structure-from-motion algorithms, the computational cousin of biological motion parallax, to build real-time 3D models of their surroundings from camera feeds.
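
At its core, structure from motion triangulates points seen from different camera positions, much as the brain exploits its own movement. The sketch below takes the simplest case: a single camera that translates a known distance sideways without rotating, with image coordinates measured from the principal point. Real pipelines estimate camera motion and 3D structure jointly from many tracked features (and, with one camera and no other metric information, only up to an overall scale), so this shows the underlying relation, not how any particular vehicle's software works. The function name is hypothetical.

```python
import math


def depth_from_sideways_move(focal_px: float, baseline_m: float,
                             x_before_px: float, x_after_px: float) -> float:
    """Depth of a static point from two frames of one camera that translated sideways.

    Assumes a pure translation of `baseline_m` along the camera's +x axis, no rotation,
    and image x-coordinates measured from the principal point. Then
        x_before - x_after = focal * baseline / depth,
    the same relation used for stereo triangulation.
    """
    disparity = x_before_px - x_after_px
    if disparity == 0:
        return math.inf  # no parallax: the point is effectively at infinity
    if disparity < 0:
        raise ValueError("negative disparity is inconsistent with a static point in front of the camera")
    return focal_px * baseline_m / disparity


# A point 10 m ahead and 2 m to the right, camera moves 0.5 m, focal length 800 px:
x1 = 800 * 2.0 / 10.0          # 160 px before the move
x2 = 800 * (2.0 - 0.5) / 10.0  # 120 px after the move
print(depth_from_sideways_move(800.0, 0.5, x1, x2))  # prints 10.0
```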

The deeper lesson is about the brain itself. We tend to think of depth perception as a solved problem, something that just works because we have two eyes. But motion parallax reveals that vision is less about receiving an image and more about actively constructing a model of the world through movement. Your brain doesn't wait for depth information to arrive. It generates it, every time you shift your gaze.

Saturn's moon Titan may harbour liquid water beneath its frozen crust, kept from freezing by ammonia acting as a natural antifreeze. New Cassini data suggests the interior could be slush with warm water pockets rather than a global ocean, and NASA's Dragonfly mission launching in 2028 aims to investigate whether this exotic environment could support life.

Bacteroides thetaiotaomicron uses 88 specialized gene clusters and over 260 enzymes to decode and digest dietary fibers humans can't break down, converting them into essential short-chain fatty acids. When fiber runs out, it eats your gut's protective mucus instead, with cascading health consequences.

Scientists are restoring Ice Age ecological dynamics through rewilding projects like Siberia's Pleistocene Park and de-extinction efforts by Colossal Biosciences. These initiatives aim to reintroduce megafauna or their proxies to repair broken ecosystems, protect Arctic permafrost, and slow climate change.

The cheerleader effect is a proven cognitive bias where people look more attractive in groups because the brain automatically averages faces, smoothing out individual flaws. Research shows the sweet spot is 3-5 people, it works for all genders, and it has real implications for dating apps and social media strategy.

The Hawaiian bobtail squid farms bioluminescent bacteria in a specialized light organ to erase its shadow in moonlit waters. This partnership, where bacteria reshape the squid's body and communicate through quorum sensing, is teaching scientists how host-microbe relationships work and inspiring new medical and biotech applications.

Millions are leaving social media platforms driven by privacy scandals, mental health concerns, and algorithmic manipulation. While 60% relapse within a week, those who stay away report dramatically improved wellbeing, and decentralized alternatives like Bluesky are surging.

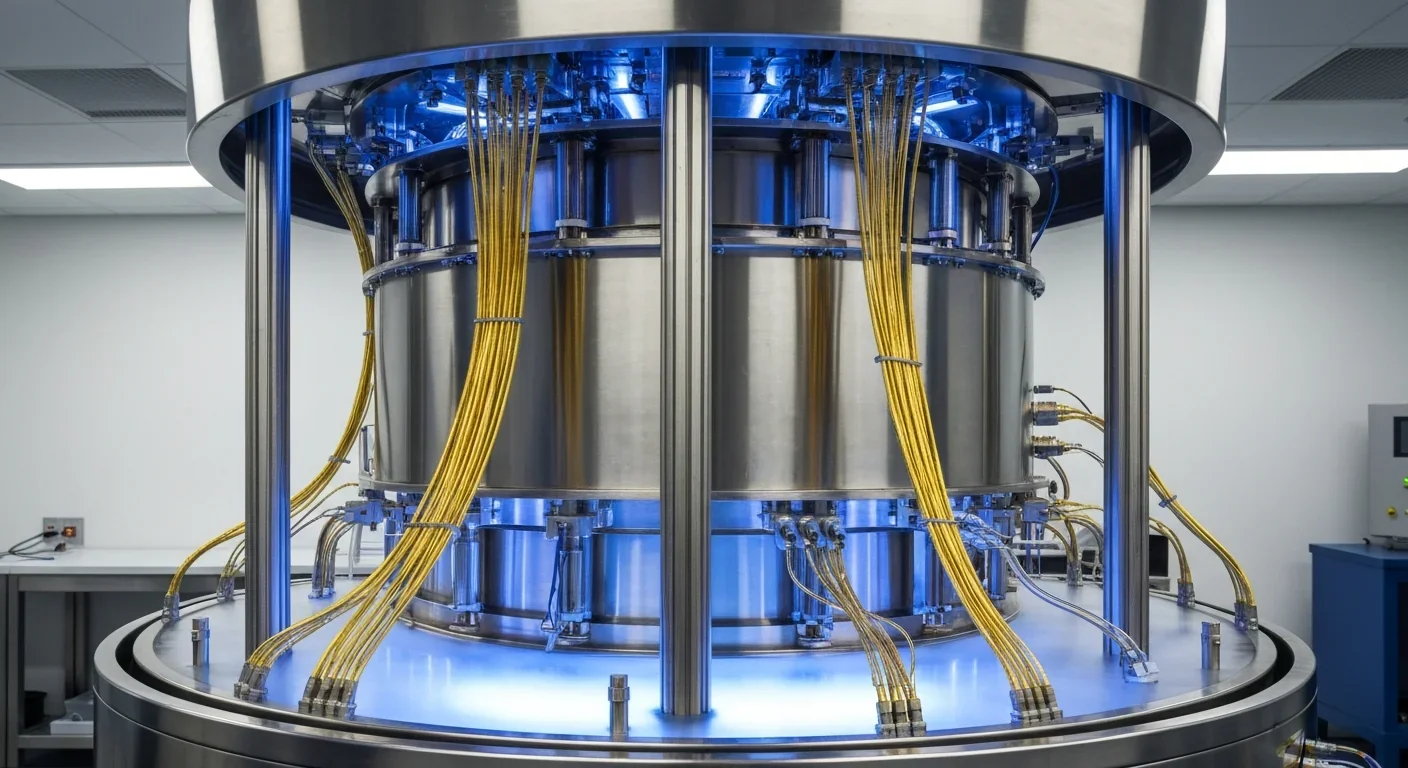

Building a reliable quantum computer requires roughly 1,000 fragile physical qubits per logical qubit due to surface code error correction overhead. New code families like LDPC and neutral-atom platforms are racing to slash that ratio, with some teams claiming it could drop to as few as 5-to-1.